-

ALDF – Average Logarithmic Distance Frequency [ statistics ]

a modified frequency that prevents the result from being excessively influenced by one part of the corpus (e.g. one or more documents) which contains a high concentration of the token. If the token is evenly distributed across the corpus, ALDF and absolute frequency will be similar or identical. In comparison with ARF (Average Reduced Frequency), ALDF is calculated from the average distances between the tokens, not the frequency of the token. see also ALDF definition -

alignment

Alignment is a term used in connection with parallel corpora. A parallel corpus consists of a text and its translation into one or more languages. Parallel corpora need to be divided into segments. A segment usually corresponds to a sentence. Alignment refers to information that tells Sketch Engine which segment (sentence) in one language is the translation of which segment (sentence) in another language. The easiest way to provide the alignment information to Sketch Engine is to upload the data in a tabular format (e.g. Excel). Alternatives that can account for more complex alignment are also available. see Build a parallel corpus -

ARF – Average Reduced Frequency [ statistics ]

a modified frequency which prevents the result from being excessively influenced by one part of the corpus (e.g. one or more documents) which contains a high concentration of the token. If the token is evenly distributed across the corpus, ARF and absolute frequency will be similar or identical. In comparison with ALDF (Average Logarithmic Distance Frequency), ARF is calculated from the frequency of the token, not distances between the tokens. see also ARF definition -

Attribute

An attribute can refer to: A positional attribute - information added to each token in a corpus, e.g. its lemma or part of speech. A structure attribute - information added to a structure in a corpus, often called metadata -

CAT tool

CAT tool stands for computer-assisted translation tool. It is software that helps translators maintain consistency in terminology across their translation jobs and also aids the translation process by suggesting (or translating automatically) passages (segments) which the translator already translated in the past. (more…) -

cluster

a process of creating groups of words in the thesaurus or word sketch. Words are grouped according to their shared collocational behavior. See more in the Clustering Neighbours documentation -

collocate

a part of a collocation that is not the node. A collocate is dependent on the node. The collocate strong and the node wind make up the collocation strong wind

The most typical collocates for every word in the language can be generated with the word sketch tool. For example, the collocations strong wind, icy wind and cold wind share the node wind and have the collocates strong, icy and cold. -

collocation

a collocation is a sequence or combination of words that occur together more often than would be expected by chance (from Wikipedia|Collocation) A collocation, e.g. fatal error, typically consists of a node (error) and a collocate (fatal). (more…) -

comparable corpus [ corpus-types ]

A comparable corpus is a corpus consisting of texts from the same domain in two or more languages. In contrast to a parallel corpus, the texts are not translations of each other, but they belong to the same domain and have the same metadata. An example of a comparable corpus is a corpus made from Wikipedia. -

compile

Corpus compilation refers to processing the corpus data (text) with the tools available for the language and converting the text into a corpus. Only a compiled corpus can be searched. see corpus compilation -

concordance [ feature ]

-

concordancer [ feature ]

A concordancer is a tool (a piece of software) which searches a text corpus and displays a concordance. (more…) -

CoNLL format

CoNLL format is a specific format of the vertical file that represents a syntactic parse tree. (more…) -

cooccurrence [ text-analysis ]

cooccurrence or co-occurrence is a term which expresses how often two terms from a corpus occur alongside each other in a certain order. It usually indicates words which together create a new meaning. Such combinations are called phrasemes or multi-word expressions, e.g. black sheep or get on. Sketch Engine helps to find such words using the word sketch tool or the collocation search. Read more about further tools for text analysis. -

corpus

A corpus is a large collection of authentic texts used for studying language or generating linguistic data. Modern corpora contain texts whose total length is billions or tens of billions of words. A corpus is usually annotated (=words are labelled with information about the part of speech and grammatical category). The terms corpus, text corpus and language corpus are interchangeable. Using a corpus for any type of linguistic or language oriented work ensures that the outcomes reflect the real use of the language. more on corpora» -

corpus architect

an intuitive tool inside Sketch Engine for creating corpora from documents or the Web which does not require any expert knowledge. See the create your own corpus page. -

corpus manager

a program used to manage text corpora, i.e. to build, edit, annotate and search corpora. Sketch Engine is the user interface to the corpus manager Manatee. -

CQL

The Corpus Query Language is a code used to set criteria for complex searches which cannot be carried out using the standard user interface controls. The criteria may include words or lemmas but also tags and other attributes, text types or structures. Conditions can be set for optional tokens or token repetition. -

CSV

a type of plain text document used for saving tabular data. It is seamlessly accepted by a large variety of applications and is therefore ideal for exporting Sketch Engine results to be used in other software. CSV can be opened directly in Microsoft Excel, Open Office, Google Documents and many others. -

deduplication

Deduplication is a process of removing duplicated content from a corpus. Only the first instance of the text is preserved, any subsequent (duplicated) occurrences are removed. (more…) -
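
The rule "keep the first instance, remove later duplicates" can be sketched in a few lines. This is only an illustration: the function name `deduplicate` and the choice to compare paragraphs after whitespace normalisation are assumptions, not a description of how Sketch Engine detects duplicates.

```python
def deduplicate(paragraphs):
    """Keep the first occurrence of each paragraph and drop later copies."""
    seen = set()
    kept = []
    for paragraph in paragraphs:
        # Assumption: two paragraphs are duplicates if they are identical
        # after collapsing runs of whitespace.
        key = " ".join(paragraph.split())
        if key not in seen:
            seen.add(key)
            kept.append(paragraph)
    return kept


texts = ["Cookie notice.", "Real content.", "Cookie  notice.", "More content."]
print(deduplicate(texts))
```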

disambiguation

a process of identifying meanings of words (lemma, part of speech) when a word has multiple meanings. The result of this process is one word with one meaning. -

distributional thesaurus [ feature ]

an automatically produced thesaurus which identifies words that occur in similar contexts as the target word. It draws on the theory of distributional semantics. (more…) -

document

A document (called a file in old corpora) in Sketch Engine refers to any file, document or webpage the corpus is made up of. If a user uploads a file (such as .doc, .pdf, .txt), each of the files becomes a corpus document. If the user downloads content from the web, each web page becomes a corpus document. (more…) -

document frequency (docf) [ statistics ]

The document frequency is the number of documents in which the token or phrase appears. If the corpus has 100 documents and 2 documents contain the word city: document number 7 contains 17 instances of city, document number 31 contains 6 instances of city, the document frequency of city is 2, because 2 documents contain the word. (more…) -
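
The city example can be reproduced with a short sketch that contrasts absolute frequency with document frequency. The function name and the toy documents are hypothetical; documents are represented simply as lists of tokens.

```python
from collections import Counter


def frequency_and_docf(documents, token):
    """Return (absolute frequency, document frequency) of a token."""
    per_document = [Counter(doc)[token] for doc in documents]
    frequency = sum(per_document)
    docf = sum(1 for count in per_document if count > 0)
    return frequency, docf


# 100 toy documents; only the documents at index 7 and 31 contain "city".
documents = [["filler"] for _ in range(100)]
documents[7] = ["city"] * 17
documents[31] = ["city"] * 6

print(frequency_and_docf(documents, "city"))  # (23, 2)
```

The absolute frequency is 23 (17 + 6), while the document frequency is 2.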

escaping

In regular expressions, escaping refers to canceling the special function of certain characters. It is typically necessary when searching for punctuation. (more…) -
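
A small sketch of the effect of escaping, using Python's `re` module as a stand-in (Sketch Engine's own regular expression engine may differ in details). An unescaped dot matches any character, an escaped dot matches only a literal dot.

```python
import re

text = "Is it 3.5 or 315? It is 3.5."

# Unescaped: "." is a wildcard, so "315" matches as well.
unescaped = re.findall(r"3.5", text)

# Escaped: "\." matches only a literal dot.
escaped = re.findall(r"3\.5", text)

print(unescaped)  # ['3.5', '315', '3.5']
print(escaped)    # ['3.5', '3.5']

# re.escape adds the backslashes automatically.
print(re.escape("3.5"))
```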

focus corpus

In keyword and term extraction, the focus corpus is the corpus from which keywords and terms are extracted. Compare reference corpus. -

frequency [ statistics ]

Frequency (also absolute frequency) refers to the number of occurrences or hits. If a word, phrase, tag etc. has a frequency of 10, it means it was found 10 times or it exists 10 times. It is an absolute figure. It is not calculated using a specific formula. compare frequency per million see also ARF document frequency Statistics used in Sketch Engine -

GDEX

Good Dictionary Examples is a technology in Sketch Engine which can identify automatically sentences which are suitable as dictionary example sentences or as teaching examples, i.e. are illustrative and representative. (more…) -

gender lemma [ attribute ]

The gender lemma is an attribute used in connection with term extraction. Its purpose is to display terminology in the correct word form in languages which observe the agreement in gender between adjectives and nouns. (more…) -

global subcorpus

a subcorpus that is shared with all users. See instructions on how to share a subcorpus with all users» -

glue

A glue <g/> is a special structure inserted into a corpus to tell Sketch Engine that two tokens, which would otherwise be displayed with a space in between, should actually be displayed without a space. Typically do and n't will have glue between them to be displayed as don't. A glue does not have any other function, it is inserted for technical reasons only. It can, however, be used in CQL searches if there is a use case. -
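
How glue affects display can be sketched as follows. The function `detokenize` is a hypothetical helper, not part of Sketch Engine; it assumes the tokens arrive as a flat list in which `<g/>` appears as its own item.

```python
GLUE = "<g/>"


def detokenize(items):
    """Join tokens with spaces, except where a glue structure intervenes."""
    pieces = []
    glue_pending = False
    for item in items:
        if item == GLUE:
            glue_pending = True
            continue
        # Insert a space unless this is the first token or glue precedes it.
        if pieces and not glue_pending:
            pieces.append(" ")
        pieces.append(item)
        glue_pending = False
    return "".join(pieces)


print(detokenize(["I", "do", "<g/>", "n't", "know", "<g/>", "."]))  # I don't know.
```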

Grammatical relation

A grammatical relation, or gramrel, refers to one column in the word sketch. Each column represents a category which displays collocates with the same relation to the search word, e.g. subjects of a verb or modifiers of a noun. Some columns may also display the usage statistics of the search word instead of collocates, e.g. the statistics of noun cases or verbal tenses of the search word. -

header field

various types of information associated with the documents of a corpus, e.g. a corpus with documents from different domains can be structured according to these domains using the header field domain of the doc structure with the value "nameofdomain", written as <doc domain="nameofdomain"> -
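
As an illustration, header fields written in this form can be read with a small regular expression. The helper `parse_structure_tag` is hypothetical and only handles simple opening tags with double-quoted values.

```python
import re

ATTRIBUTE = re.compile(r'(\w+)="([^"]*)"')
OPENING_TAG = re.compile(r'<(\w+)((?:\s+\w+="[^"]*")*)\s*>')


def parse_structure_tag(line):
    """Return (structure name, {field: value}) or None if the line is not a tag."""
    match = OPENING_TAG.fullmatch(line.strip())
    if match is None:
        return None
    return match.group(1), dict(ATTRIBUTE.findall(match.group(2)))


print(parse_structure_tag('<doc domain="nameofdomain">'))
```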

keyword

(Not to be confused with terms, which is a related concept.) Keywords is a concept used in connection with Keyword & Term extraction. Keywords are words (single-token items) that appear more frequently in the focus corpus than in the reference corpus. They are used to identify what is specific to a corpus (focus corpus) or its subcorpus in comparison with another corpus (reference corpus) or its subcorpus. It is recommended to use terms, not keywords, for the purpose of terminology extraction. (more…) -

KWIC

KWIC is the acronym for Key Word in Context and refers to the red text highlighted in a concordance. The red text is the result that matches the search criteria. Such a concordance is referred to as a KWIC concordance. (more…) -

lc [ attribute ]

(also referred to as word_lc, word lowercase or word form lowercase) is a positional attribute assigned to each token in the corpus. The lc attribute is a lowercased version of the word attribute: John becomes john, Apple becomes apple, BE becomes be. The lc attribute makes the uppercase and lowercase versions of each token identical. The lc attribute is used for case insensitive searching and analysis. see also word form lemma (lowercase) list of attributes -

learner corpus [ corpus-types ]

A collection of texts produced by learners of a language used to study errors and mistakes made by learners of languages. Learner corpora in Sketch Engine can use both error and correction annotation. A special search interface is available to search by the former or the latter or both. see also Setting up a learner corpus -

lemma [ attribute ]

Lemma is a positional attribute. It is the basic form of a word, typically the form found in dictionaries. A lemmatized corpus allows searching for the basic form and including all forms of the word in the result, e.g. searching for lemma go will find go, goes, went, going, gone. (more…) -

lemma_lc [ attribute ]

lemma_lc is a positional attribute. It is a lemma converted to lowercase. apple and Apple are treated as the same thing. It is used for case insensitive searching and case insensitive analysis. see lemma -

Lemmatization

Lemmatization is a process of assigning a lemma to each word form in a corpus using an automatic tool called a lemmatizer. Lemmatization brings the benefit of searching for a base form of a word and getting all the derived forms in the result, e.g. searching for go will also find goes, went, gone, going. See also PoS tagger stemming -

lempos [ attribute ]

Lempos is a positional attribute, i.e. an attribute assigned to each token in the corpus. It is a combination of lemma and part of speech (pos) consisting of the lemma, a hyphen and a one-letter abbreviation of the part of speech, e.g. go-v, house-n. The part of speech abbreviations differ between corpora. Lempos is case sensitive, house-n is different from House-n. see also lempos_lc lemma list of attributes -
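
The structure of a lempos can be illustrated with two small helpers. Both function names are hypothetical; the one-letter abbreviations (v, n) are taken from the examples above and differ between corpora.

```python
def make_lempos(lemma, pos_letter):
    """Combine a lemma and a one-letter part-of-speech abbreviation."""
    return f"{lemma}-{pos_letter}"


def split_lempos(lempos):
    """Split at the LAST hyphen so that hyphenated lemmas stay intact."""
    lemma, _, pos_letter = lempos.rpartition("-")
    return lemma, pos_letter


print(make_lempos("go", "v"))             # go-v
print(split_lempos("T-shirt-n"))          # ('T-shirt', 'n')
print(make_lempos("House", "n").lower())  # house-n, the lowercased variant
```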

lempos_lc [ attribute ]

lempos_lc is a positional attribute. It is a lowercased version of lempos. All uppercase letters are converted to lowercase, thus House-n becomes identical with house-n. It is used for case insensitive searching and analysis. see also lempos list of attributes -

likelihood [ statistics ]

a function of the parameters of a statistical model. It plays a key role in statistical inference and is the basis for the log-likelihood function. see Statistics in Sketch Engine -

log-likelihood [ statistics ]

one of the functions used in the statistics computed by Sketch Engine. It is an association measure based on the likelihood function, used in tests for significance (see the log-likelihood calculator and more details) -

logDice [ statistics ]

a statistical measure for identifying co-occurrence (=two items appearing together). Sketch Engine uses it to identify collocations. It expresses the typicality (or strength) of the collocation. It is used in the word sketch feature and also when computing collocations from a concordance. (more…) -
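
For orientation, logDice is commonly given as 14 + log2(2·f_xy / (f_x + f_y)), where f_xy is the number of co-occurrences and f_x, f_y are the frequencies of the two words. The sketch below implements that published formula; the function and parameter names are illustrative and the counts are invented.

```python
import math


def log_dice(f_xy, f_x, f_y):
    """logDice from co-occurrence count f_xy and word frequencies f_x, f_y."""
    return 14 + math.log2(2 * f_xy / (f_x + f_y))


# Two words that only ever occur together reach the theoretical maximum of 14.
print(log_dice(10, 10, 10))     # 14.0

# Invented counts: 50 co-occurrences, word frequencies 1000 and 600.
print(log_dice(50, 1000, 600))  # 10.0
```

Because the formula uses only the three frequencies and not the corpus size, scores can be compared across corpora of different sizes.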

Longest-commonest match

The longest-commonest match (LCM) was coined by Adam Kilgarriff to name the most common realisation of a collocation, i.e. the chunk of language in which the collocation appears most frequently. The longest-commonest match is part of the word sketch result screen to facilitate the understanding of how the collocation typically behaves. (more…) -

longtag [ attribute ]

Longtag is a detailed part-of-speech tag which usually contains more information than tag. Some corpora have tags containing only basic information on parts of speech and an additional attribute longtag containing detailed grammatical information such as case, number, gender, etc. Longtags are available in the Estonian corpus etTenTen or the Turkish corpus trTenTen. -

Macro

Macro is a concordance feature that automates your usual concordance operations. Macros let you save all the actions applied on the concordance and carry them out automatically on future concordances. (more…) -

metadata

information about the texts in the corpus: for example, year of publication, author name, publishing house, medium (written, spoken), register (formal, informal) etc. Metadata are automatically converted to text types in Sketch Engine. see Annotate a corpus -

MI Score [ statistics ]

The Mutual Information score expresses the extent to which words co-occur compared to the number of times they appear separately. MI Score is strongly affected by frequency: low-frequency words tend to reach a high MI score, which may be misleading. (more…) -
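
The sensitivity to low frequencies can be seen in a small sketch. It assumes the common formulation MI = log2(f_xy · N / (f_x · f_y)), with N the corpus size in tokens; the function name and all counts are invented for illustration.

```python
import math


def mi_score(f_xy, f_x, f_y, corpus_size):
    """Mutual Information: observed co-occurrences relative to chance."""
    return math.log2(f_xy * corpus_size / (f_x * f_y))


N = 1_000_000

# A reasonably frequent pair.
frequent = mi_score(f_xy=50, f_x=1000, f_y=500, corpus_size=N)

# Two words that occur only twice each, both times together.
rare = mi_score(f_xy=2, f_x=2, f_y=2, corpus_size=N)

print(round(frequent, 2), round(rare, 2))
```

The rare pair scores far higher than the frequent one even though it rests on only two observations.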

minimum sensitivity [ statistics ]

a statistical measure similar to logDice which is the minimum of the two following numbers:

- the number of co-occurrences divided by the frequency of the collocate

- the number of co-occurrences divided by the frequency of the node word

The minimum sensitivity number grows with a high number of co-occurrences and falls with a high number of occurrences of the individual words (node word or collocate).
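
The definition above translates directly into code. The function name and the counts are illustrative only.

```python
def minimum_sensitivity(cooccurrences, f_node, f_collocate):
    """The smaller of the two co-occurrence ratios described above."""
    return min(cooccurrences / f_collocate, cooccurrences / f_node)


# Invented counts: 50 co-occurrences, node frequency 1000, collocate frequency 200.
print(minimum_sensitivity(50, f_node=1000, f_collocate=200))  # 0.05
```

Here 50 / 200 = 0.25 and 50 / 1000 = 0.05, so the result is 0.05.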

-

multilevel list

a list sorted at more than one level e.g. a frequency list sorted by word form followed by lemma and then tag, see this multilevel list in the BAWE corpus. -

n-gram

is a sequence of items (bigram = 2 items, trigram = 3 items ... n-gram = n items). An item can refer to anything (letter, digit, syllable, token, word or others). In the context of corpora and corpus linguistics, n-grams typically refer to tokens (or words). In linguistics, n-grams are sometimes referred to as MWEs, i.e. multiword expressions. (more…) -
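
A minimal sketch of extracting token n-grams from a list of tokens. The function name is hypothetical and the example sentence is invented.

```python
def ngrams(tokens, n):
    """Return all contiguous sequences of n tokens."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


tokens = ["the", "strong", "wind", "blew"]
print(ngrams(tokens, 2))  # bigrams
print(ngrams(tokens, 3))  # trigrams
```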

node

(talking about collocations) the central word in a collocation, e.g. strong wind consists of the collocate strong and the node wind. (talking about concordances) the search word or phrase, sometimes called a query, which appears in the centre of a KWIC concordance or highlighted in other types of concordances -

non-word

Non-words (also spelt nonwords) are tokens which do not start with a letter of the alphabet. Examples of non-words are numbers, punctuation but also tokens such as 25-hour, 16-year-old, !mportant, 3D. Tokens such as post-1945, mp3 or CO2 are words because they start with a letter. The regular expression Sketch Engine uses to identify non-words is [^[:alpha:]].* Compare word. -
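
The word/non-word distinction can be approximated in Python. Note that Python's `re` module does not support the POSIX class `[[:alpha:]]`, so this sketch checks the first character with `str.isalpha()` instead; that is an assumption and may classify some Unicode characters differently from Sketch Engine.

```python
def is_word(token):
    """True if the token starts with a letter, otherwise it is a non-word."""
    return bool(token) and token[0].isalpha()


for token in ["post-1945", "mp3", "CO2", "25-hour", "16-year-old", "!mportant", "3D", ","]:
    print(token, "word" if is_word(token) else "non-word")
```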

overall score [ statistics ]

score of the relation based on logDice in word sketches. The score is displayed in the header of each column of the relation. -

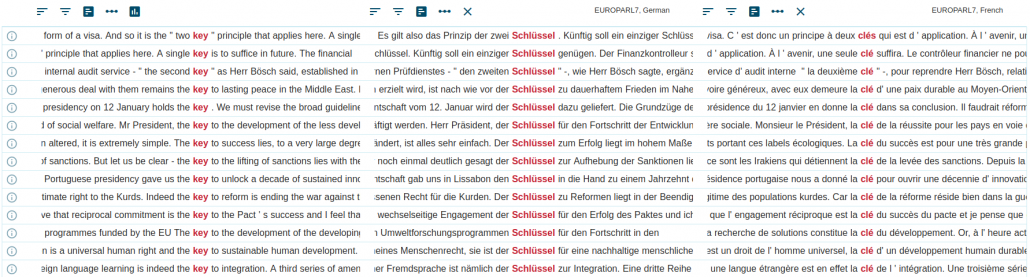

parallel corpus [ corpus-types ]

A parallel corpus consists of the same text translated into one or more languages. The texts are aligned (matching segments, usually sentences, are linked). The corpus allows searches in one or both languages to look up or compare translations.

-

PoS

part of speech, some typical examples of parts of speech are: noun, adjective, verb, adverb etc. -

POS tag [ attribute ]

A POS tag is the same as tag. -

POS tagger

POS (part of speech) tagging is a process of annotating each token with a tag carrying information about the part of speech and often also morphological and grammatical information such as number, gender, case, tense etc. The automatic tagging tool is called a tagger or POS tagger. See also lemmatization stemming -

positional attribute

A positional attribute is information added to each token in a corpus, typically its lemma or tag. (more…) -

preloaded corpus [ corpus-types ]

a ready-to-use corpus included in Sketch Engine subscription or Trial access, not created by a user, e.g. British National Corpus -

prevertical file

A prevertical file is a plain text file that contains the corpus text and structures. Usually, it is a source file for creating vertical files, which are created from the prevertical by the tokenization process. (more…) -

query

a sequence of characters or words or their combinations entered by the user in order to retrieve a concordance. Often, the word query is not restricted to the concordance only but can also refer to any type of search or criteria used in connection with any Sketch Engine feature, i.e. Word Sketch, thesaurus, word list etc. -

reference corpus

A reference corpus is used in keyword extraction and term extraction. A reference corpus is a corpus to which the focus corpus is compared. When using the Keywords & Terms tool, a reference corpus is preselected but the user can use a different corpus as the reference corpus. The reference corpus can but does not have to be the same for keywords and for terms. A reference corpus can also be used with n-grams to identify n-grams typical of the focus corpus in comparison with the reference corpus. In the word sketch, a reference corpus is used to identify key collocations when using the AS A LIST option. see also term keyword term extraction (definition) term extraction (quick start guide) -

regular expressions

a collection of special symbols that can be used to search for patterns rather than specific characters, e.g. to find all words starting with, containing or ending in a specific sequence of characters. For example, .*tion will find all words ending in tion and having an unlimited number of characters at the beginning. read more» -
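
The .*tion example can be tried out with Python's `re` module, used here only as a stand-in for Sketch Engine's own engine. `fullmatch` is used on the assumption that the pattern has to cover the whole word; the word list is invented.

```python
import re

pattern = re.compile(r".*tion")

words = ["nation", "station", "national", "tion", "action"]

# fullmatch requires the pattern to cover the entire word.
matches = [word for word in words if pattern.fullmatch(word)]
print(matches)  # ['nation', 'station', 'tion', 'action']
```

The word national is rejected because it does not end in tion, while tion itself matches because .* also accepts zero characters.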

relative frequency, frequency per million [ statistics ]

(also called freq/mill in the interface) is a number of occurrences of an item per million tokens, also called i.p.m. (instances per million). It is used to compare frequencies between corpora (or datasets) of different sizes.

Formula: number of hits / corpus size in millions of tokens = frequency per million

An alternative calculation producing the same result: raw frequency / corpus size in tokens × 1,000,000 = frequency per million (more…) -
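
Both calculations can be checked with a short sketch. The function names and the counts (250 hits in a corpus of 50 million tokens) are invented for illustration.

```python
def frequency_per_million(hits, corpus_size_tokens):
    """Raw frequency scaled to a corpus of one million tokens."""
    return hits * 1_000_000 / corpus_size_tokens


def frequency_per_million_alt(hits, corpus_size_millions):
    """The same value, starting from the corpus size in millions of tokens."""
    return hits / corpus_size_millions


print(frequency_per_million(250, 50_000_000))  # 5.0
print(frequency_per_million_alt(250, 50))      # 5.0
```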

relative text type frequency

(also called Relative density in the interface) Relative text type frequency compares the frequency in a specific text type to the frequency in the whole corpus. It shows how typical the word(s) is of a specific text type, e.g. of the spoken part of the corpus or of a particular website which the texts were downloaded from. (more…) -

salience [ statistics ]

a statistical measure of the significance of a specific token in the given context. This is measured with logDice. For more information, see section 3 of Statistics used in Sketch Engine. -

search attribute

the attribute that is used for the search and creating a word list. You can have the word list of words, lemmas, tags, etc. -

search span

the number of tokens on either side of the node that will be checked when filtering a concordance. Setting the search span from -5 to 5 means that the filter applies to all concordance lines which contain a match for the filter criterion within the range of 5 tokens on either side of the node. -

segment

Segments refer to the parts into which a parallel (multilingual) corpus is divided for the purpose of alignment. Alignment means that the corpus contains information about which segment in one language is a translation of which segment in another language. Segments typically correspond to sentences but some corpora can be aligned at a paragraph or document level. The shorter the segments, the easier it is to locate the translated word or phrase in the segment. -

simple maths [ statistics ]

The simple maths formula is used to calculate the keyness score in Sketch Engine. This score is used to identify terms, keywords and also key n-grams and key collocations. It identifies items which appear more frequently in the focus corpus than in the reference corpus. It uses relative (per million) frequencies and, therefore, makes it possible to contrast corpora of unequal sizes. see Simple maths. -
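
As a sketch, simple maths is usually presented as the ratio of the two per-million frequencies after adding a smoothing constant to each. The parameter name `smoothing` and its default of 1 are assumptions here, as are the example frequencies.

```python
def keyness(fpm_focus, fpm_reference, smoothing=1.0):
    """Simple maths keyness score from per-million frequencies."""
    return (fpm_focus + smoothing) / (fpm_reference + smoothing)


# An item with 99 hits per million in the focus corpus and 9 in the reference corpus.
print(keyness(99, 9))  # 10.0

# An item absent from the reference corpus still gets a finite score.
print(keyness(99, 0))  # 100.0
```

The added constant avoids division by zero for items missing from the reference corpus, and a larger constant shifts the ranking towards more frequent items.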

stem [ attribute ]

A stem is a part of a word without its affixes (suffixes, prefixes, etc.). Stems do not have to be valid word forms, e.g. stem hav for the word form having, in comparison to lemma have for the word form having. Stems are used instead of lemmas or in addition to lemmas with languages whose morphology requires it. An example are agglutinating languages such as Turkish, Hungarian or Japanese. -

stemming

stemming is the process during which the affixes (suffixes, prefixes, etc.) of a word are removed until only the stem remains. Stemming is used to detect related words with the same stem, i.e. the word root which does not change with case, number or tense. Word stems are available in the Portuguese corpus ptTenTen or the Turkish corpus trTenTen. This analysis is performed with tools called stemmers. Stemming is also used instead of lemmatization with agglutinating languages such as Hungarian or Turkish. See also PoS tagger lemmatization -

structure

a corpus structure refers to the segments or parts into which a corpus can be divided. Typically, a corpus is divided into sentences, paragraphs and documents but the corpus author can introduce various other structures to allow the analysis to focus on smaller or larger parts of the corpus. see a list of common corpus structures see Dividing a corpus into smaller parts and annotating them -

subcorpus

a corpus can be subdivided into an unlimited number of parts called subcorpora. Subcorpora can be used to divide the corpus by the type (fiction, newspaper), media (spoken, written) or time (e.g. by years) or by any other criteria. A subcorpus can also be created from a concordance by including all concordance lines and the documents they come from into a subcorpus. A subcorpus can be selected on the advanced tab of most of the tools (except for word sketch differences and thesaurus). Selecting a subcorpus will restrict the search or the analysis to only this subcorpus. How to create a subcorpus» -

T-score [ statistics ]

T-score expresses the certainty with which we can argue that there is an association between the words, i.e. their co-occurrence is not random. The value is affected by the frequency of the whole collocation, which is why very frequent word combinations tend to reach a high T-score despite not being significant collocations. (more…) -
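
The dependence on the frequency of the whole collocation can be seen in a sketch based on the common formulation T = (f_xy − f_x·f_y / N) / √f_xy, with N the corpus size in tokens. The function name and all counts are invented.

```python
import math


def t_score(f_xy, f_x, f_y, corpus_size):
    """T-score: observed minus expected co-occurrences, scaled by sqrt(observed)."""
    expected = f_x * f_y / corpus_size
    return (f_xy - expected) / math.sqrt(f_xy)


N = 1_000_000

moderate = t_score(f_xy=50, f_x=1000, f_y=500, corpus_size=N)
very_frequent = t_score(f_xy=5000, f_x=40_000, f_y=30_000, corpus_size=N)

print(round(moderate, 2), round(very_frequent, 2))
```

The very frequent combination receives a much higher score simply because it occurs often.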

tag [ attribute ]

(also called part-of-speech tag, POS tag or morphological tag) is a label assigned to each token in an annotated corpus to indicate the part of speech and grammatical category. The tool used to annotate a corpus is called a tagger. A collection of tags used in a corpus is called a tagset. The most frequently used tags in a corpus are listed on the corpus information page with a link to the complete tagset. Our blog post on POS tags explains how they work. -

tagset

(called also tag set) is a list of part-of-speech tags used in one corpus. In Sketch Engine, corpora in the same language tend to use the same tagset but exceptions exist. To check the tagset used, access Corpus statistics and details. See our blog about POS tags. -

TBL

application in Sketch Engine for collecting usage-example sentences to build dictionaries. Find more on the Tick Box Lexicography page -

term

Terms is a concept used in connection with Keywords & Terms tool. A term is a multi-word expression (consisting of several tokens) which appears more frequently in one corpus (focus corpus) compared to another corpus (reference corpus) and, at the same time, the expression has a format of a term in the language. (more…) -

term base

In connection with CAT tools, a term base is a database of subject-specific terminology and other lexical items which need to be translated consistently. The CAT tool uses the term base to check the consistency of translation, to look for untranslated segments, and to suggest (or automatically supply) translations of the terms from the database. -

term extraction

the process of identifying subject specific vocabulary in a subject specific text usually using specialized software. The identification of one-word and multi-word terms in Sketch Engine is based on the comparison of the frequency of such words and phrases between the reference corpus and the focus corpus. compare keywords related topics term extraction explained (blog) term grammar reference corpus focus corpus -

term grammar

A term grammar is a set of rules written in CQL which define the lexical structures, typically noun phrases, which should be included in term extraction. The lexical structures are defined using POS tags and CQL. The use of a term grammar ensures a clean term extraction result which requires very little post editing. (more…) -

text analysis [ text-analysis ]

text analysis (also content analysis or text analytics) is a method for analyzing (usually unstructured) text in order to extract information. The result of the text analysis is structured data. In addition to the traditional tools, Sketch Engine also offers some unique features. The traditional tools consist of various frequency-based statistics:

- word or lemma frequency, part-of-speech frequency via the wordlist tool

- bigram, trigram, n-gram frequencies via the n-gram tool

- absolute frequencies, relative frequencies, document frequencies, average reduced frequency (ARF)

- phrase and multiword frequency via the concordance

- high-quality keyword and term extraction enhanced with reference data and suitable for topic modelling

- co-occurrence analysis using linguistic criteria via the word sketch

-

text mining [ text-analysis ]

text mining is an automatic process of extracting information from text, such as keywords of a text or its source(s). The corresponding tools in Sketch Engine are WebBootCaT for creating corpora from the web or keywords and terms extraction which finds terminology in your texts. Read about other text analysis tools. -

text type

[We follow Biber (1989) in using text type as a generic term for the many ways in which a text might be classified.] A text type refers to values assigned to structures (e.g. documents, paragraphs, sentences or others) inside a corpus. Text types can refer to the source (newspaper, book, etc.), medium (spoken, written), time (year, century), or any other type of information about the text. (more…) -

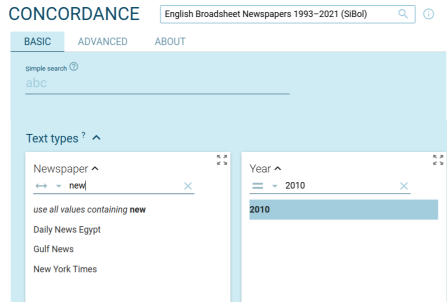

text type selector

Any search in Sketch Engine can be limited to certain text types only. The results will be taken from documents annotated with the specific text type(s). Users can include metadata in their corpora. If the metadata are in the required format, they will be converted to text types and will appear in the text type selector. The text type selector can be found either in the BASIC tab (concordance), or the ADVANCED tab (wordlist, thesaurus, word sketches, ...).

Read more about text types -

TMX – Translation Memory eXchange format

Translation Memory eXchange (TMX) is a specific XML format used for creating parallel corpora in Sketch Engine. This format is standardly used in translation memories (TM). See more about Setting up parallel corpora in Sketch Engine. (more…) -

token

A token is the smallest unit that a corpus consists of. A token normally refers to:

- a word form: going, trees, Mary, twenty-five…

- punctuation: comma, dot, question mark, quotes…

- digit: 50,000…

- abbreviations, product names: 3M, i600, XP, FB…

- anything else between spaces

-

tokenization

Tokenization is the automatic process of separating text into tokens. This process is performed by tools called tokenizers. -
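
To give a feel for what a tokenizer does, here is a deliberately naive regex-based sketch. It is not the tokenizer used by Sketch Engine; real tokenizers are language-specific and handle many more cases. The pattern keeps hyphens, apostrophes, commas and dots inside a token only when they sit between word characters.

```python
import re

# A run of word characters, optionally continued across - ' , . when another
# word character follows; otherwise a single punctuation character.
TOKEN = re.compile(r"\w+(?:[-',.]\w+)*|[^\w\s]")


def tokenize(text):
    """Split text into a list of naive tokens."""
    return TOKEN.findall(text)


print(tokenize("Mary bought 50,000 trees, twenty-five each."))
```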

tokenizer

-

translation memory

A translation memory is a database inside a CAT tool which holds segments of text translated in the past. The CAT tool can suggest (or automatically supply) translations based on matching text from the translation memory. -

trends

Trends is a feature used for diachronic analysis, i.e. for identifying how the frequency of the word (or other attributes) changes over time. read more -

Type/token ratio (TTR)

The type/token ratio, often shortened TTR, is a simple measure of lexical diversity. It can only be interpreted when comparing it to TTR of a different text (corpus). The corpus with a higher TTR contains a higher variety of words than the other corpus. In other words, the authors use more different words, or richer vocabulary, than the authors of the texts in the other corpus. (more…) -
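
A minimal sketch of the calculation: the number of distinct tokens (types) divided by the total number of tokens. The function name and the two toy texts are invented, and real comparisons should use texts of similar length.

```python
def type_token_ratio(tokens):
    """Number of distinct tokens divided by the total number of tokens."""
    return len(set(tokens)) / len(tokens)


text_a = ["the", "cat", "saw", "the", "dog"]
text_b = ["the", "the", "the", "cat", "cat"]

print(type_token_ratio(text_a))  # 0.8
print(type_token_ratio(text_b))  # 0.4
```

The first text has the higher ratio, i.e. the more varied vocabulary of the two.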

UMS

feature available to users with local installation for the administration of users and corpora. -

user corpus [ corpus-types ]

a corpus created by a user. Users can create corpora by uploading their own data or using Sketch Engine to collect data from the Web. User corpora are created as private. No other user can access them. However, users can grant access to the corpus to individually selected users. This is called sharing. User corpora can never be made completely public. see also Create a corpus by uploading files Create a corpus from the web Share a corpus with other users -

vertical file

A vertical file is a text file where each token (or word) is on a separate line. This format is typically used for text corpora and may contain additional metainformation (annotation). Vertical files are usually created from a prevertical format. (more…) -
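
The layout can be illustrated by generating a tiny vertical file. The sketch assumes tab-separated columns in the order word, tag, lemma and structures `<doc>` and `<s>` on their own lines; the tag values are invented, and the columns of a real corpus depend on its configuration.

```python
def to_vertical(sentences):
    """Render annotated sentences as vertical text, one token per line."""
    lines = ["<doc>"]
    for sentence in sentences:
        lines.append("<s>")
        for word, tag, lemma in sentence:
            lines.append(f"{word}\t{tag}\t{lemma}")
        lines.append("</s>")
    lines.append("</doc>")
    return "\n".join(lines)


sentence = [("Trees", "NNS", "tree"), ("grow", "VBP", "grow"), (".", "SENT", ".")]
print(to_vertical([sentence]))
```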

web mining [ text-analysis ]

web mining is the application of data mining which extracts information from texts. Web mining is focused on gaining information and metadata from the web. For this task, Sketch Engine uses the fully-automated tool WebBootCaT for creating corpora from the web, which also stores metadata of the processed websites. Read about other text analysis tools. -

Word

Note: This entry is for the type of token. For the positional attribute, see word form. A word is a type of token. All tokens in a corpus are divided into two groups: words and nonwords. Words are tokens which begin with a letter of the alphabet. Tokens such as book, working, Mary, T-shirt, post-1945, mp3 or CO2 are words because they start with a letter. The regular expression Sketch Engine uses to identify words is [[:alpha:]].* Compare to nonword. -

word form [ attribute ]

ⓘ This entry is for the positional attribute: word form, lemma, lowercase, tag… For the type of token, the opposite of nonword, see word. The word form (often shortened to word in the interface) is a positional attribute. It refers to one of the word forms that a lemma can take, e.g. the lemma go can take these word forms go, went, gone, goes, going. (more…) -

word list

A word list is a generic name for various types of lists such as list of words, lemmas, POS tags or other attributes with their frequency (hit counts, document counts or others). -

word sketch

The word sketch is a tool to display collocations (=word combinations) in a compact, easy-to-understand way. The word sketch makes it easy to understand how a word behaves, which contexts it typically appears in and which words it can be used together with. (more…) -

Word Sketch grammar

Word Sketch grammar (WSG) is a set of rules defining the grammatical relations (=columns/categories) in a Word Sketch. In other words, WSG tells Sketch Engine which words should be regarded as collocations of the search word and also what type of collocation they are. (more…) -

Word sketch triple

A word sketch triple is a data format used for representing one collocation identified by the word sketch. A word sketch triple consists of:

- node as lempos

- name of the grammatical relation as displayed in the header of the column in word sketch interface

- collocate as lempos.

school-n modifiers of "%w" secondary-j

(more…)
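
The example triple above can be split into its three parts as follows. The helper is hypothetical and assumes the fields are separated by whitespace, with the node first and the collocate last; real data may use a different separator such as a tab.

```python
def parse_triple(line):
    """Split a triple into (node lempos, grammatical relation, collocate lempos)."""
    head, collocate = line.rsplit(None, 1)  # last field is the collocate
    node, gramrel = head.split(None, 1)     # first field is the node
    return node, gramrel, collocate


print(parse_triple('school-n modifiers of "%w" secondary-j'))
```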